A child can count better than Frontier AI Models

If Your “Frontier” Model Can’t Count, You Don’t Have Reasoning. You Have Vibes.

Here’s a truth that makes people mad:

A 128K context window doesn’t mean your model can count.

It means it can read more while still doing approximate attention math.

So when you ask an LLM to aggregate a long document (count categories, sum totals, tally events), you’re not testing “reasoning.”

You’re testing whether it can do exact arithmetic using a tool that isn’t exact.

That’s why OOLONG, a benchmark built around aggregation over long contexts, hurts.

Here’s the cheat code: don’t let the LLM count.

Stop Asking LLMs to Aggregate. Make the CPU Count.

🐉 The Counting Dragon

This dragon shows up everywhere:

- “How many customers mentioned churn risk?”

- “How many times does the term X appear?”

- “What’s the total revenue by segment?”

- “Count the number of records that match criterion Y.”
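Once the data sits in rows, several of these questions take only a few lines of deterministic code. The following is a minimal Python sketch. It assumes the records are already parsed into dicts with hypothetical `customer`, `text`, `segment` and `revenue` fields. The field names and sample values are illustrative, not from a real dataset.

```python
import re
from collections import defaultdict
from decimal import Decimal

# Hypothetical, already-parsed records; field names and values are illustrative.
records = [
    {"customer": "a", "text": "Churn risk flagged. Churn is likely.", "segment": "SMB", "revenue": "1200.50"},
    {"customer": "b", "text": "Happy customer, no issues.", "segment": "Enterprise", "revenue": "9800.00"},
    {"customer": "c", "text": "Mentioned churn once.", "segment": "SMB", "revenue": "310.25"},
]

def term_pattern(term):
    """Whole-word, case-insensitive regex for a literal term."""
    return re.compile(rf"\b{re.escape(term)}\b", re.IGNORECASE)

def count_term(rows, term):
    """'How many times does term X appear?': total occurrences across all rows."""
    pattern = term_pattern(term)
    return sum(len(pattern.findall(row["text"])) for row in rows)

def customers_mentioning(rows, term):
    """'How many customers mentioned X?': distinct customers with at least one hit."""
    pattern = term_pattern(term)
    return len({row["customer"] for row in rows if pattern.search(row["text"])})

def revenue_by_segment(rows):
    """'Total revenue by segment': Decimal keeps cents exact (no float rounding)."""
    totals = defaultdict(Decimal)  # Decimal() starts at Decimal("0")
    for row in rows:
        totals[row["segment"]] += Decimal(row["revenue"])
    return dict(totals)

print(count_term(records, "churn"))            # → 3
print(customers_mentioning(records, "churn"))  # → 2
print(revenue_by_segment(records))
```

Every number here comes from enumeration, so running it twice on the same rows gives the same answer.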

A pure LLM answer often feels right… until you verify it.

And in production, “close enough” is wrong.

The principle (one line)

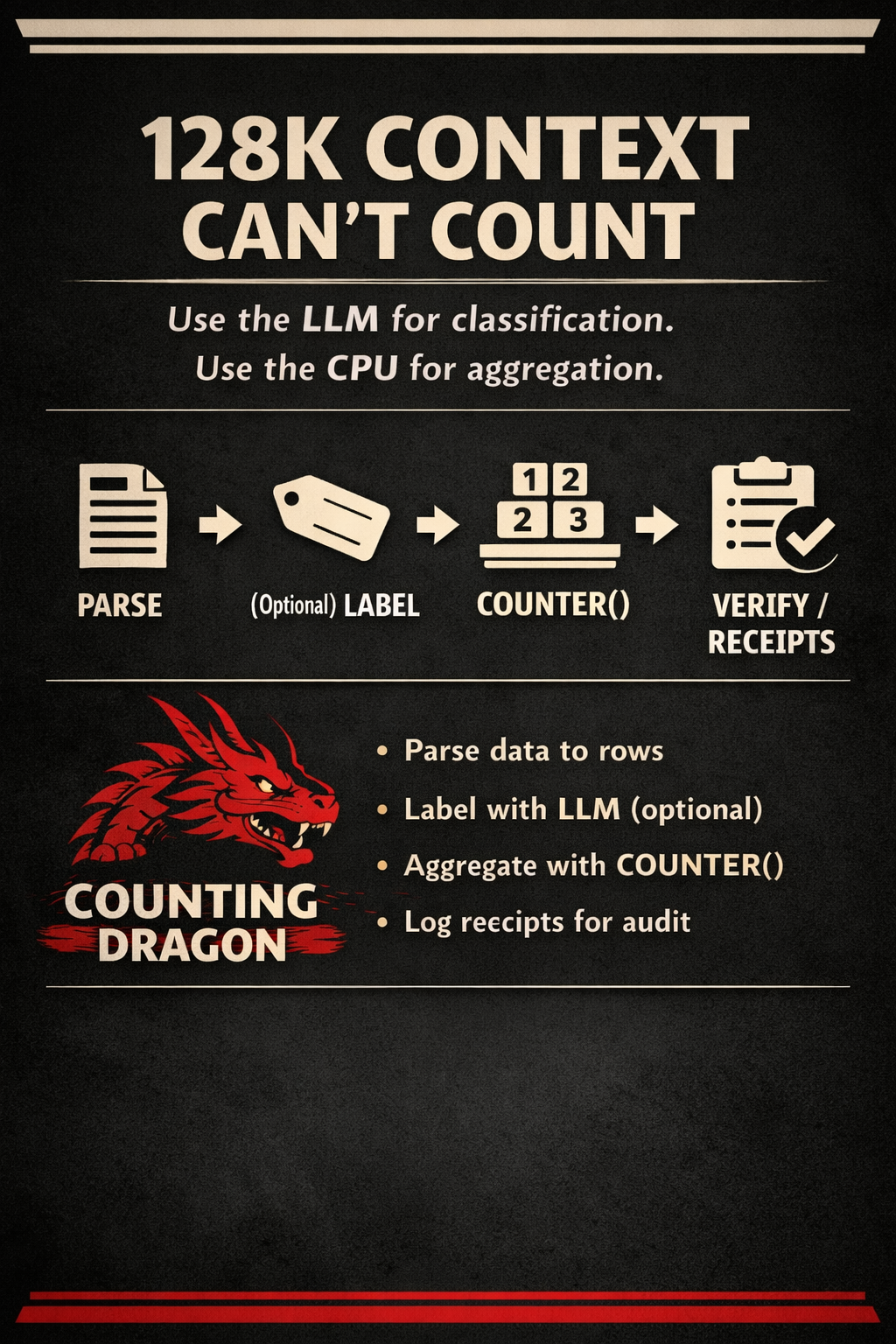

Use the LLM for classification. Use deterministic code for aggregation.

That’s it.

That’s the whole trick.

The Counter-Bypass Protocol (Stillwater style)

This is the protocol I use to beat long-context aggregation traps:

1) Parse → structured rows (deterministic)

Turn the big blob into rows/fields.

2) (Optional) LLM labels each row

Only if the classification requires fuzzy semantics.

3) CPU aggregates (required)

Use Counter(), sum(), exact integers/fractions.

4) Verify with executable markers

Emit counts + a reproducible audit trail:

- sample rows that match each bucket

- hashes of inputs/outputs

- “if you rerun, you get the same result”
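The four steps can be sketched in Python as follows. This is one possible shape, not a reference implementation, and it rests on loud assumptions. The input is a plain-text blob with one `id|text` record per line. `label_row` is a hypothetical keyword rule standing in for the optional LLM labeling step; in practice that call would go to your model, and the counting code would not change. The report layout is also illustrative.

```python
import hashlib
import json
from collections import Counter
from fractions import Fraction

RAW = """\
1|Refund not processed for my invoice
2|App crashes when I open settings
3|Please add dark mode
4|Charged twice this month
"""

def parse_rows(blob):
    """Step 1: deterministic parse of 'id|text' lines into structured rows."""
    rows = []
    for line in blob.splitlines():
        if not line.strip():
            continue
        row_id, text = line.split("|", 1)
        rows.append({"id": int(row_id), "text": text.strip()})
    return rows

def label_row(row):
    """Step 2 (optional): hypothetical stand-in for an LLM classifier."""
    text = row["text"].lower()
    if any(word in text for word in ("refund", "charged", "invoice")):
        return "billing"
    if any(word in text for word in ("crash", "error", "bug")):
        return "bug"
    return "feature"

def sha256_json(obj):
    """Stable hash: sorted keys so identical data always hashes identically."""
    return hashlib.sha256(json.dumps(obj, sort_keys=True).encode("utf-8")).hexdigest()

def run(blob):
    rows = parse_rows(blob)
    labeled = [dict(row, label=label_row(row)) for row in rows]
    # Step 3: the CPU counts; exact integers, and exact fractions for shares.
    counts = Counter(row["label"] for row in labeled)
    total = sum(counts.values())
    shares = {label: Fraction(n, total) for label, n in counts.items()}
    # Step 4: receipts, meaning sample rows per bucket plus input/output hashes.
    report = {
        "counts": dict(sorted(counts.items())),
        "shares": {label: str(share) for label, share in sorted(shares.items())},
        "samples": {label: [r["id"] for r in labeled if r["label"] == label][:3]
                    for label in sorted(counts)},
        "input_sha256": hashlib.sha256(blob.encode("utf-8")).hexdigest(),
    }
    report["output_sha256"] = sha256_json({k: report[k] for k in ("counts", "shares", "samples")})
    return report

report = run(RAW)
print(json.dumps(report, indent=2))
assert run(RAW) == report  # rerun on the same input yields the identical report
```

Note the split. Labeling is the only fuzzy step. Everything after it is plain enumeration, which makes the hashes meaningful.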

LLM is the interpreter. CPU is the accountant. Never swap those.

A simple example (that scales)

Let’s say you have 5,000 support tickets and you ask:

“How many are billing vs bugs vs feature requests?”

Bad approach:

- paste all tickets into the model

- ask for totals

- trust the numbers

Disciplined approach:

- store tickets as rows

- ask LLM to label each ticket (billing/bug/feature) with a confidence tag

- use CPU to count labels exactly

- output: totals + 20 example tickets per category + rerun command
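The disciplined approach can be sketched in a few lines of Python. This sketch assumes each ticket has already been labeled (by an LLM or anything else) into a hypothetical `(ticket_id, label, confidence)` tuple. The 0.8 review threshold and the helper names are illustrative choices, not part of any library.

```python
from collections import Counter, defaultdict

# Hypothetical labeler output: (ticket_id, label, confidence in [0, 1]).
labeled = [
    (101, "billing", 0.97),
    (102, "bug", 0.91),
    (103, "feature", 0.62),
    (104, "billing", 0.88),
    (105, "bug", 0.55),
]

REVIEW_THRESHOLD = 0.8  # illustrative cutoff for routing a label to human review

def tally(tickets):
    """Exact per-category counts; the model never adds anything up."""
    return Counter(label for _, label, _ in tickets)

def sample_per_category(tickets, k=20):
    """Up to k ticket IDs per category, in input order, for the audit report."""
    samples = defaultdict(list)
    for ticket_id, label, _ in tickets:
        if len(samples[label]) < k:
            samples[label].append(ticket_id)
    return dict(samples)

def low_confidence(tickets, threshold=REVIEW_THRESHOLD):
    """IDs whose confidence tag falls below the review threshold."""
    return [ticket_id for ticket_id, _, conf in tickets if conf < threshold]

print(tally(labeled))
print(sample_per_category(labeled))
print(low_confidence(labeled))  # → [103, 105]
```

The confidence tag never changes the count. It only decides which tickets a human should spot-check.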

Now your result isn’t “a vibe.”

It’s a report you can audit.

Why attention fails at counting (plain-English)

LLMs don’t “loop” over tokens the way your code loops over rows.

They compress patterns, and compression is approximate.

Counting is not pattern-completion.

Counting is enumeration.

So the fix is not “think harder.”

The fix is: use the right tool.

The MrBeast-style challenge (viral loop)

Let’s do a public sparring test.

Challenge: paste a long chunk of text (or a dataset summary) and ask for an exact count or aggregation.

I’ll reply with:

- the minimal parse schema

- whether you need LLM classification (or not)

- the exact Counter() aggregation strategy

- what receipts you should log so a skeptic can rerun it

Comment “COUNT” and I’ll drop the “Counter-Bypass Card” you can paste into your agent prompt.

Bonus: the Tower framing (makes it memorable)

This is Floor 2: Foundation in the Stillwater Tower.

If your agent can’t beat the Counting Dragon, it shouldn’t be touching:

- analytics

- finance

- ops dashboards

- compliance summaries

- incident postmortems

Because those domains punish “almost.”

The point (one line)

Long context isn’t memory. It’s a bigger whiteboard. If you want correctness: determinism + receipts.

Endure. Excel. Evolve. — Phuc Vinh Truong